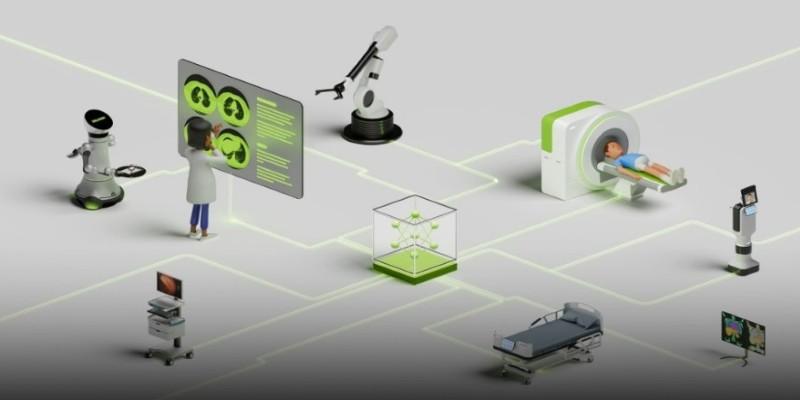

At GTC 2025, Nvidia didn't just talk about speeding up neural networks or scaling GPUs. It put a spotlight on something far more grounded: your next hospital visit. Healthcare isn't new to Nvidia, but this year's announcements were sharper and more physical. Medical scans are about to change. Robotic arms and AI-driven decision engines are moving from lab demos to practical tools that read X-rays, guide ultrasound wands, and suggest next steps, all in real time.

Nvidia's keynote gave healthcare workers and hospitals a closer look at how it plans to combine its chips, its software, and now even its robotic partners to make diagnostics faster, more accurate, and less dependent on overworked radiologists. This isn’t a rebranding of existing hardware. Nvidia is fusing AI with robotics to read, react, and move—right at the bedside.

From GPU to ICU: Why Healthcare Needs AI + Robotics

Hospitals are data-heavy places, but most of that data still waits in silos, seen only after something goes wrong. Nvidia's idea is to use AI to get ahead of that timeline. Medical imaging—such as MRIs, CT scans, X-rays, and ultrasounds—generates enormous amounts of visual data that often require hours to interpret and analyze. With Nvidia's new hardware-software stacks, much of that interpretation could be instant.

One of the key platforms unveiled was an update to Clara Holoscan, Nvidia’s edge AI computing system tailored for surgical and diagnostic tools. But the real shift wasn’t just the processing—it was the physical presence. Nvidia, working with robotic system manufacturers, demoed robot-guided scanning systems powered by Clara and Jetson. These units don’t replace human operators but assist them by aligning scanners precisely and suggesting scan angles based on learned imaging patterns.

In short, it’s not just about training AI models to read lung shadows. It’s about letting AI and robots help generate the scan itself more intelligently. This, Nvidia claims, reduces rescans, speeds up workflows, and provides rural hospitals with the tools typically found in well-funded research clinics.

This is part of a broader trend where robotics and machine learning work side by side. At GTC, speakers repeatedly used the term "agentic AI," referring to AI systems that don't just calculate but can act. In medical imaging, that means tools that can adjust mid-procedure or alert staff to early signs without waiting for batch processing.

What Nvidia Is Actually Building (And Why It’s Not Hype)

GTC 2025 didn't shy away from specifics. One standout demo featured an AI-guided robotic ultrasound platform. Here, Nvidia's Jetson Orin modules handled real-time video processing, comparing the live feed to thousands of anonymized scans and flagging anomalies on the operator's screen while the scan was still in progress. It didn't diagnose, but it did highlight areas to revisit, something radiologists often wish they had time to do.

Nvidia’s updated Clara Guardian suite, already used in hospital monitoring and AI diagnostics, now extends into mobile robotics. Small autonomous carts equipped with sensors and Clara-based inference systems were shown scanning patient QR tags, confirming treatment routines, and relaying data to backend EMRs with little human input. This may sound like warehouse logistics, but in overburdened clinics where staff juggle dozens of cases per shift, automating routine checks can mean better attention to serious patients.

Healthcare AI isn't just about speed. Accuracy matters. One Nvidia presentation referenced studies in which its imaging models, trained with federated learning across multiple hospital systems, matched or exceeded the performance of expert readers in detecting small tumors or vascular anomalies. The key word there is "federated": each hospital trains on its own data and shares only model updates, so patient records never leave the institution. That eases privacy concerns while still allowing cross-institutional learning.
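To make the federated idea concrete, here is a minimal sketch of federated averaging in plain Python. It is not Nvidia's implementation: the function names (`local_update`, `federated_average`, `run_rounds`) are hypothetical, and a two-parameter linear model stands in for a real imaging network. The point it illustrates is that each site trains on its own records and only the resulting weights are sent to a coordinator.

```python
from typing import List, Tuple

Weights = List[float]               # [slope, intercept] of a toy linear model
Dataset = List[Tuple[float, float]] # (x, y) pairs held privately by one site


def local_update(weights: Weights, data: Dataset,
                 lr: float = 0.1, epochs: int = 5) -> Weights:
    """Run a few gradient-descent steps on one site's private data.

    Only the updated weights are returned; the data itself is never shared.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        # Gradients of mean squared error for y ≈ w * x + b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


def federated_average(updates: List[Weights], sizes: List[int]) -> Weights:
    """Combine site weights, weighting each site by its number of samples."""
    total = sum(sizes)
    dim = len(updates[0])
    return [sum(u[i] * s for u, s in zip(updates, sizes)) / total
            for i in range(dim)]


def run_rounds(site_data: List[Dataset], rounds: int = 100) -> Weights:
    """Coordinator loop: broadcast weights, collect updates, average them."""
    global_weights: Weights = [0.0, 0.0]
    sizes = [len(d) for d in site_data]
    for _ in range(rounds):
        updates = [local_update(global_weights, d) for d in site_data]
        global_weights = federated_average(updates, sizes)
    return global_weights


if __name__ == "__main__":
    # Two hypothetical hospitals, each holding samples of y = 2x + 1
    hospital_a = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]
    hospital_b = [(1.5, 4.0), (2.0, 5.0)]
    print(run_rounds([hospital_a, hospital_b], rounds=200))
```

In a real deployment the weights would belong to a deep network and the exchange would typically add protections such as encryption or secure aggregation, but the division of labor is the same: training happens locally, and only parameters travel.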

Partnerships, Platforms, and Practical Obstacles

Even with the hardware ready, adoption doesn’t happen in a vacuum. Nvidia’s push includes partnerships with hospitals, med-tech firms, and academic researchers. Collaborations with Siemens Healthineers and GE Healthcare are already underway. Some GTC panels included physicians who spoke directly about how AI models can help flag mistakes or reduce false positives in time-sensitive diagnoses like stroke or internal bleeding.

Still, challenges remain. Regulation is one of them. Any device that interacts physically with patients must meet strict safety standards. AI systems that assist rather than replace human readers can be approved more quickly, but still need validation trials. Nvidia seems to favor a hybrid approach: human-in-the-loop systems that build trust gradually while increasing their autonomy.

Another challenge is infrastructure. Not every hospital has the bandwidth or hardware to run advanced AI workloads locally. Nvidia’s response leans on edge devices like Holoscan and Jetson, which don’t require a full data center but still deliver serious inference capabilities. Even rural or mid-size clinics could deploy them, with models updating over time via secure cloud links.

Cost is another hurdle. While Nvidia's tech is state-of-the-art, it's not cheap. However, by highlighting workflow improvements—such as reducing scan times, minimizing manual errors, or enhancing triage—Nvidia is making an economic case to administrators who measure ROI in terms of patient throughput and staff efficiency.

A Future That Moves and Thinks at the Same Time

GTC 2025 showed Nvidia is moving beyond faster chips to systems that see, move, and decide together. Healthcare, with overworked staff, rising demand, and costly delays, stands to benefit greatly. Nvidia aims to support, not replace, doctors with AI that spots missed strokes, robots that steady scanners, and workflows that react in real time. These powerful, practical tools are already here, not just a future promise. Whether they become as standard as a stethoscope will depend on regulation, affordability, infrastructure, and clinician trust. What's clear now is that AI in healthcare is stepping out of the back office and into scan rooms, ERs, and even ambulances—working alongside people to help deliver better, faster care precisely when and where it's needed most.