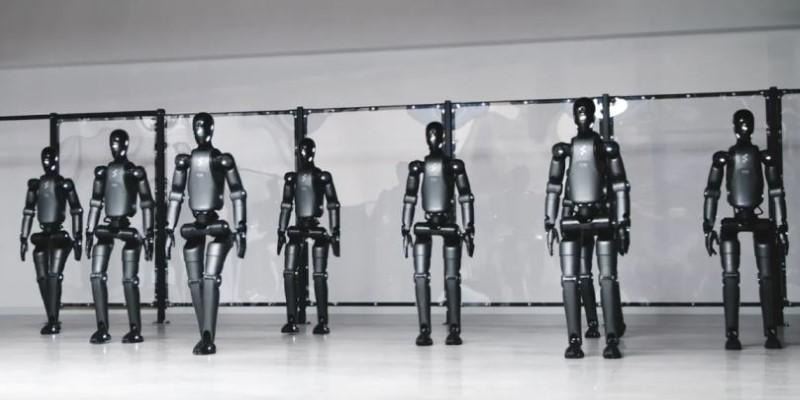

We often think of artificial intelligence as something inseparable from the internet — constantly connected, forever updating, always watching. But Google DeepMind seems to be rewriting that idea. With the quiet confidence of a lab that rarely speaks before it’s ready, they’ve introduced a line of AI-powered robots that can function entirely offline. That’s right — no cloud, no updates, no data stream trickling in the background. And that might just change everything.

This isn't about robots doing backflips or holding full conversations. It's about something far more grounded: autonomy that doesn't rely on connectivity. It's the kind of leap that doesn't scream for attention but instead leans into solving real-world friction — places where internet access is patchy, unreliable, or downright impossible. Think remote farms, disaster zones, or simply warehouses with a terrible signal. Let’s take a closer look at what’s really going on here.

What Makes These Robots Different?

To understand what DeepMind has done, it helps to set aside the usual picture of AI. Many cloud-connected systems rely on a constant loop: data goes up to the cloud, a decision is made there, and then the result comes back down. That cycle, even if fast, demands connection.

DeepMind’s approach with these offline robots is different. They’ve managed to compress complex reasoning into local hardware. The training still happens in the lab, of course. But once deployed, these robots don’t need to phone home. They carry the intelligence with them.

Local Models, Not Dumbed Down

Now, offline doesn’t mean watered down. The models inside these robots are still hefty. They’ve been trained with a mixture of reinforcement learning and imitation learning — the kind where the robot doesn’t just memorize what to do, but learns how to think in terms of goal-oriented behavior.

What’s fascinating is how compact these models have become. DeepMind engineers found a way to squeeze down model size without hollowing out capability. That’s not just technical wizardry; it’s practical engineering. And that’s the difference between a cool demo and something that can actually be deployed in the field.

Where It’s Headed — and Why It Matters

The most obvious applications are the ones you might expect: manufacturing, logistics, and agriculture. But it's the edge cases that make the strongest argument. A robot that can harvest fruit in a remote field without needing satellite coverage. A machine that can sort packages in a low-signal warehouse without stalling every five minutes. A helper that can aid in recovery efforts during a power outage, when the network is down but the job still needs to be done. And there’s another layer here: privacy.

No Data Leaves the Machine

One understated advantage of offline operation is that your data never has to leave the robot. There’s no stream of location logs, no video being uploaded, no behavioral patterns being sent for analysis. For industries bound by confidentiality, like healthcare or high-security manufacturing, this alone is a major win.

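It also changes the risk profile. No connection means no remote attack surface while the robot is working. A machine that isn’t on the network can’t be reached over it, although physical access and tampered software loads still have to be guarded against. And for once, that makes AI safer, not riskier.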

How Offline Functionality Works — Step by Step

There’s no magic button that turns a connected robot into a fully autonomous one. DeepMind had to rethink the structure of how its AI systems operate. Here’s how it all comes together.

Step 1: Centralized Training on Diverse Tasks

Before anything ever goes into the field, the robots are exposed to huge simulated environments. This includes everything from cluttered rooms to unpredictable terrain. The AI is trained not on specific tasks but on decision-making patterns — how to adapt, how to react, how to anticipate.

Step 2: Model Compression and Optimization

This is where the heavy lifting happens. DeepMind’s researchers take the large models trained in the lab and pare them down without sacrificing core performance. Think of it as fitting a jet engine into a suitcase. The resulting models are lean, fast, and efficient enough to run on local hardware without choking.
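
To make the idea of paring a model down more concrete, here is a minimal sketch of one common compression technique, 8-bit weight quantization. This is an illustration only: nothing in the announcement says which methods DeepMind uses, and the function names and sample weights below are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # The scale converts between float values and integer steps.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, standing in for one layer of a trained model.
weights = [0.82, -1.27, 0.003, 0.5, -0.31]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the cost of a small, bounded rounding error. Real deployments typically combine several techniques and re-validate task performance afterwards.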

Step 3: On-Device Control System Deployment

With the optimized AI model ready, it’s loaded directly onto the robot’s onboard systems. Everything it needs — from motion planning to visual recognition — runs right there. There’s no step where the robot needs to check back with the cloud or wait for a signal. It’s all immediate.
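
As a rough sketch of what "everything runs right there" looks like in code, the loop below senses, decides, and acts without a single network call. The `Observation` type, the thresholds, and the rule-based `local_policy` are assumptions for illustration; a real robot would call its compressed, learned model at that point.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Observation:
    obstacle_distance_m: float  # hypothetical sensor reading, in meters


def local_policy(obs: Observation) -> str:
    """Stand-in for the on-device model: a hand-written rule, not a learned policy."""
    if obs.obstacle_distance_m < 0.5:
        return "stop"
    if obs.obstacle_distance_m < 1.5:
        return "slow"
    return "forward"


def run_control_loop(
    readings: List[float], policy: Callable[[Observation], str]
) -> List[str]:
    """Sense -> decide -> act, entirely in-process with no remote round trip."""
    actions = []
    for distance in readings:
        obs = Observation(obstacle_distance_m=distance)
        actions.append(policy(obs))  # decision is made locally, immediately
    return actions


# Simulated sensor readings as the robot approaches an obstacle.
actions = run_control_loop([3.0, 1.2, 0.4], local_policy)
```

Because the policy is just a local function call, the time to a decision depends only on the onboard hardware, not on signal strength.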

Step 4: Edge Testing in Real-World Scenarios

These robots don't go straight from the lab to the market. DeepMind's testing process involves real-world scenarios: warehouses with blocked Wi-Fi, farmland with sketchy reception, even underground tunnels. The goal is to identify edge cases and refine offline stability before any wide-scale rollout.

Why Google Is Betting on This

There's a quiet shift happening in how AI is used. Until now, everything has trended toward more data, more connections, and more centralization. But that's not sustainable for every environment. Google knows this. DeepMind's move into offline functionality isn't just a technical flex — it's a strategic pivot.

When your AI can function independently, you start to think differently about where it can be used. You’re no longer locked into urban infrastructure. You’re not stuck worrying about latency or data costs. And for a company like Google, which already runs one of the largest cloud platforms, making something that doesn’t need the cloud shows a kind of long-term thinking that isn’t just about profit. It’s about resilience.

Final Thoughts

Google DeepMind’s offline AI robots may not look flashy on the surface. They’re not built to impress with smooth-talking dialogue or futuristic movements. But that’s not the point. What DeepMind has done here is practical, thoughtful, and quietly disruptive. They’ve shown that intelligence doesn’t always need to be connected — that sometimes, the smartest machine is the one that can think for itself when no one’s watching. And in a world that’s grown increasingly dependent on constant connection, that kind of independence might just be the next big thing.